Our team build has been flaky lately, with several unit tests failing intermittently (and most often only on the build server). I was getting fed up with a build that was broken most of the time, so I decided to see if I could figure out why the tests fail.

I worked on the premise that the intermittent failures were caused either by multiple tests stepping on each other’s toes or by a timing issue, and I decided that the timing issue would be the easier of the two to figure out. To try to reproduce it, I set up a load test in Visual Studio that runs the failing unit test repeatedly under load.

To create a new load test:

1) Select “Test –> New Test” and choose “Load Test” in the “Add New Test” dialog.

2) You will be presented with a wizard that helps you build a load test. I don’t know what each setting means, so for the most part I left the settings at their defaults. I’ve noted the exceptions below.

a) Welcome

b) Scenario

c) Load Pattern

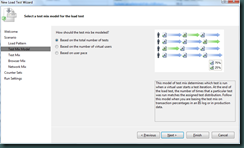

d) Test Mix Model

e) Test Mix

The Test Mix step allows you to select which tests should be included in the load test. For my purposes, I selected the single test that was failing intermittently. If you select multiple tests this step allows you to manage the distribution of each test within the load.

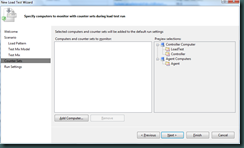

f) Counter Sets

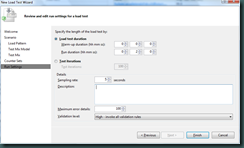

g) Run Settings

Finally, you can set the warm-up duration and the run duration. The warm-up duration is used to “warm up” the environment: during this time the load test controller runs the tests but does not include their results in the load test. The run duration is how long the load test controller runs the mix of tests selected in the “Test Mix” step.

Once you click Finish, you will be presented with a view of the load test you’ve just created. You can now click on the “Run test” button to execute the load test.

Gotcha: The first time I tried to run a load test, it complained that it couldn’t connect to the database. You can resolve this by running the following SQL script and then, in Visual Studio, selecting Test –> Administer Test Controllers… This will bring up the following dialog.

The only thing you need to configure in this dialog is the database connection. The script creates a database called “LoadTest”, so in my case the connection string looked like this:

Data Source=(local);Initial Catalog=LoadTest;Integrated Security=True

Now that you have the load test created, you are ready to run it. Click the “Run test” button on the load test window (shown below).

While the load test is running, Visual Studio shows four graphs with information about the current test run. You can play with the option checkboxes to show or hide different metrics.

In my case, as the load test ran the unit test I’d selected started to fail. This at least showed me that I could reproduce the failure on my local machine now.
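To get a feel for why running a test under load can expose a timing problem, here is a rough sketch of the idea in plain C#. It is not how the Visual Studio load test engine works internally; it is a simplified stand-in that runs a test body on several threads at once and counts how many runs throw. The names `MiniLoadRunner`, `Run`, `users`, and `iterations` are all made up for this illustration.

```csharp
using System;
using System.Threading;

// Hypothetical helper, not part of Visual Studio: a crude imitation of
// "N simultaneous users each running the same test repeatedly".
public static class MiniLoadRunner
{
    // Runs testBody on `users` threads, `iterations` times per thread,
    // and returns the number of runs that threw an exception.
    public static int Run(Action testBody, int users, int iterations)
    {
        int failures = 0;
        var threads = new Thread[users];

        for (int i = 0; i < users; i++)
        {
            threads[i] = new Thread(() =>
            {
                for (int j = 0; j < iterations; j++)
                {
                    try
                    {
                        testBody();
                    }
                    catch (Exception)
                    {
                        // Count the failure without losing updates
                        // when several threads fail at the same time.
                        Interlocked.Increment(ref failures);
                    }
                }
            });
            threads[i].Start();
        }

        // Wait for every simulated user to finish.
        foreach (var thread in threads)
        {
            thread.Join();
        }

        return failures;
    }
}
```

Calling something like `MiniLoadRunner.Run(MyFlakyTestBody, 25, 100)` (where `MyFlakyTestBody` is a placeholder for the code under test) would mimic 25 simultaneous users. A test that only fails under contention may fail in some runs and not others, so the count will vary from run to run.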

Next I added a bit of code to the unit test just before the assertion that was failing. Instead of doing the assertion, the new code checked for the failure condition and called Debugger.Break() when it was met.
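The change looked roughly like the sketch below. The helper name `FailureProbe.BreakIf` and the `expected`/`actual` values are placeholders for illustration, not the actual test code. The `Debugger.IsAttached` check is an extra guard I'm assuming here, so the code does nothing unusual when no debugger is attached.

```csharp
using System;
using System.Diagnostics;

// Hypothetical helper: break into the debugger when the failure
// condition holds, instead of letting the assertion fail the test.
public static class FailureProbe
{
    // Returns the condition so the caller can also log or count it.
    public static bool BreakIf(bool failureCondition)
    {
        if (failureCondition && Debugger.IsAttached)
        {
            // Pauses here so the state that led to the failure
            // can be inspected while it still exists.
            Debugger.Break();
        }
        return failureCondition;
    }
}

public static class FlakyTestSketch
{
    // Stand-in for the body of the intermittently failing unit test.
    public static bool CheckResult(int expected, int actual)
    {
        // Originally something like: Assert.AreEqual(expected, actual);
        return FailureProbe.BreakIf(expected != actual);
    }
}
```

With a debugger attached, execution stops on the `Debugger.Break()` line only when the values differ, which is exactly the moment you want to look around.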

I ran the test again, but this time as I hit the Debugger.Break() calls I couldn’t step through the code very well, because the load test was running 25 simultaneous threads (simulating 25 users). I dug around a bit and found that you can change the number of simultaneous users: select the “Constant Load Pattern” node and adjust the “Constant User Count” in the Properties window.

I ran the test again and this time I was able to debug the test and figure out what was wrong.

Overall, the load test tool in Visual Studio was quite easy to set up and get going. The only real hurdle was the database it requires to store the load test results.
